Overview

Befriend is a conceptual AI-powered relationship assistant that helps users reflect on emotional patterns and navigate complex interpersonal interactions. Designed for academic exploration, the project investigates how large language models and contextual memory can offer guidance and clarity in real-world communication, helping users understand their relationships over time.

How might AI help people navigate emotionally complex interactions and reflect on recurring relationship patterns?

Introduction

Modern relationships are full of emotionally nuanced moments that are often hard to articulate. People commonly turn to friends, note-taking apps, or online advice for clarity, but these options are not always available or unbiased.

Befriend explores how an AI-powered assistant could fill this gap by helping users decode interactions and reflect on emotional patterns, while offering support around the clock. The central question guiding this work was: How might AI help people navigate their real-life relationships?

To explore this, I analyzed how people currently seek clarity and emotional support, identified gaps in existing tools, and experimented with AI-generated personas to simulate real-world emotional contexts, extending the reach of traditional UX research.

Research

To understand how people seek clarity and reassurance in relationships, I conducted five semi-structured interviews with dating-app users who already rely on AI in their communication workflows. Participants described using AI to draft replies, refine tone, and navigate ambiguous interactions.

The interviews surfaced recurring emotional challenges, decision-making behaviors, and trust considerations. Participants pointed to three gaps that existing tools failed to address: long-term context, continuity of emotional insights, and reflective support.

AI Persona - Esther

To synthesize insights from user interviews, I created an AI persona named Esther. Modeled as a college student navigating dating apps and social interactions, Esther captured the emotional challenges and decision-making patterns uncovered in research.

Esther was used as a lens to test scenarios, explore recurring patterns, and validate how users might interact with an AI assistant for reflection and guidance. By grounding the persona in real user data, I could pressure-test ideas before moving into more detailed interaction design.

Esther persona - a research-grounded model for emotional needs, habits, and decision-making patterns

Ideation

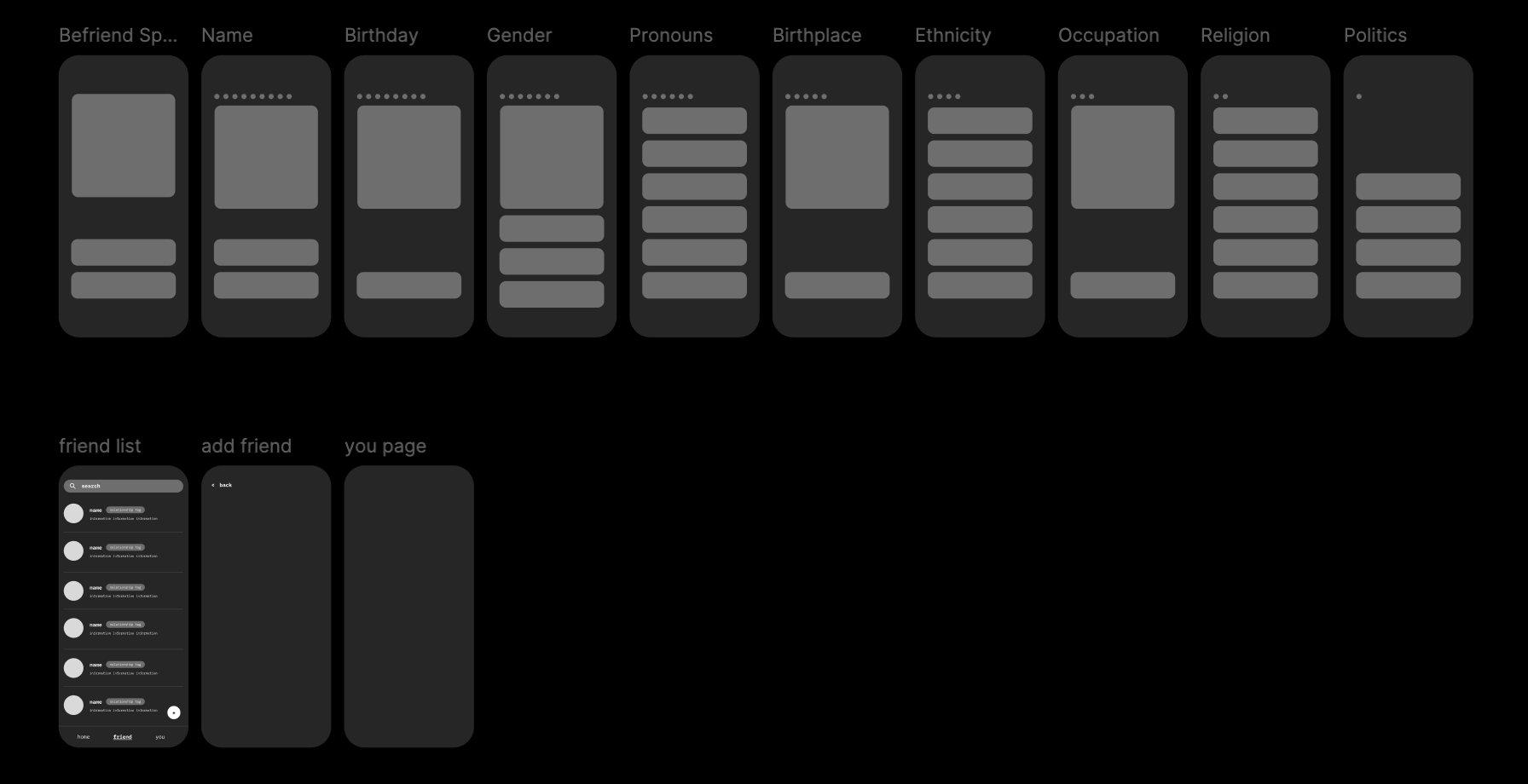

The ideation phase used AI as a speed multiplier for generating early wireframes and exploring multiple structural directions quickly. Tools like Gemini, Lovable, and Figma Make helped me produce rough interface concepts, screen layouts, and flow variations in parallel, which made it easier to compare approaches without over-investing in polishing any single direction too early.

From there, I manually edited and refined the outputs in Figma to shape the hierarchy, information flow, and overall structure of the app. Rather than treating AI outputs as finished design, I used them as a starting point to clarify what should feel primary, what should stay lightweight, and how the experience should unfold across reflection, memory, and guidance.

Final Design

The final design demonstrates a progression of user experience over time, illustrating how the AI supports emotional reflection and relationship navigation:

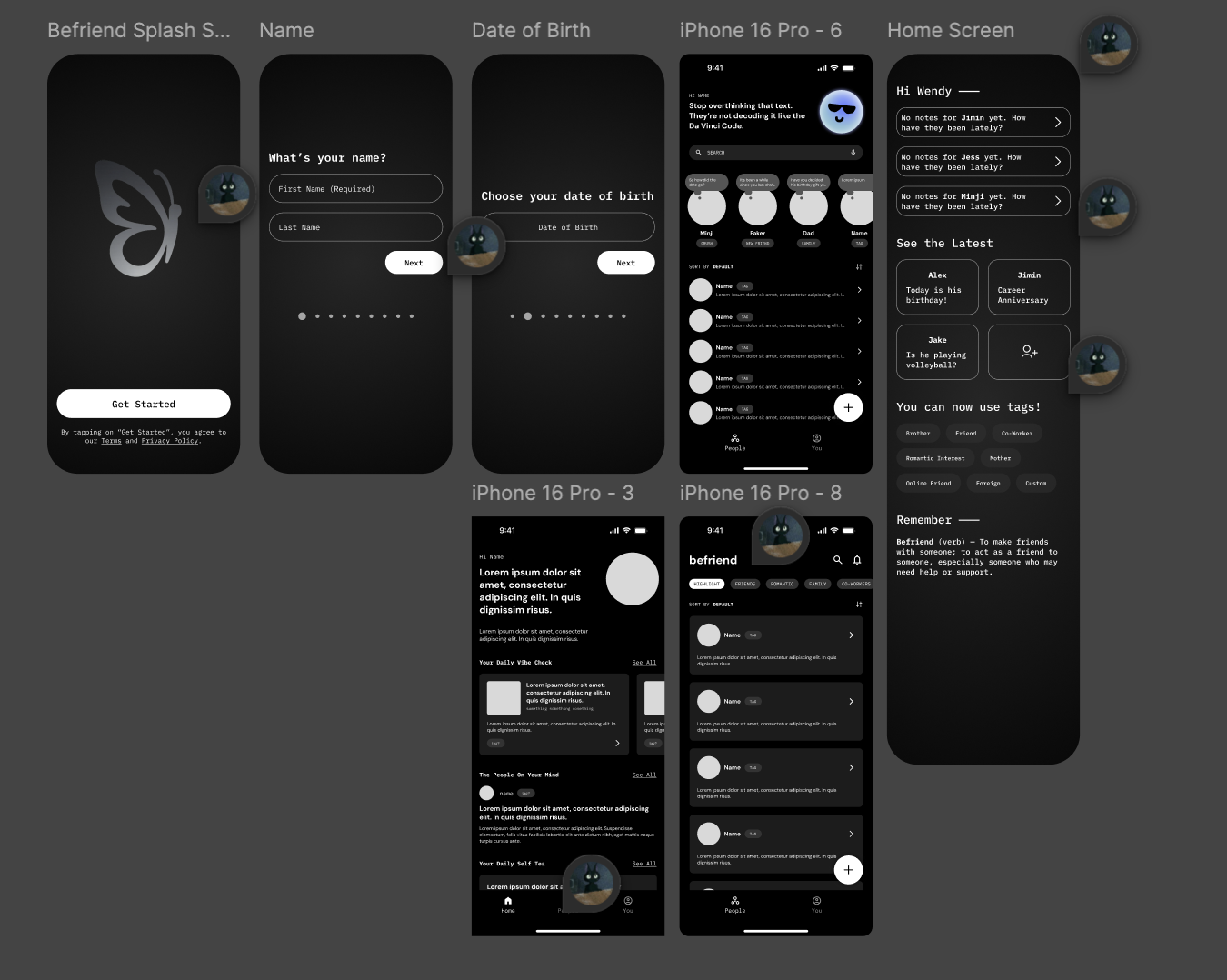

Day 1 - Onboarding

The first-time user flow guides users through initial setup, including identifying the first person they want to 'befriend' and setting baseline communication preferences. This establishes the context for AI-assisted reflection and interaction.

Day 1 - onboarding establishes the first relationship context and baseline preferences

Day 7 - Pattern Recognition

By the end of the first week, users begin revisiting interactions, reflecting on recent experiences, and recognizing emerging patterns. The AI surfaces contextual insights, helping users notice subtle emotional dynamics in their interactions.

Day 7 - recurring signals begin to surface through reflection and memory

Day 30 - Personalized Reflection

After a month of consistent usage, the AI has established sufficient context to provide deeper, personalized reflections. Users receive insights into recurring behaviors, communication habits, and opportunities for growth in their relationships, encouraging intentional and emotionally aware decision-making.

Day 30 - the system delivers more personalized reflection based on accumulated context

Role & Tools

This project was completed by a team of two designers. I focused on shaping the overall product direction, building the interaction structure, and translating the research into the core reflection, memory, and guidance flows. My teammate supported visual development, explored early concepts, and refined parts of the interface system as the product direction became clearer.

Together, we used Figma, Adobe CC, Miro, Lovable, GPT-4, and Gemini to move quickly from research to concept development and then into more resolved interaction design.

Next Steps

If developed further, the next phase would focus on improving memory transparency, giving users clearer control over what the assistant remembers and why specific reflections are surfaced over time.

I would also explore ways to make emotional guidance feel more grounded and less generic, including richer reflection prompts, more nuanced tone controls, and stronger safeguards around sensitive interpersonal situations.

Longer term, the product could evolve from a reactive advice tool into a more intentional reflective companion, helping users build healthier communication habits through repeated use rather than one-off moments of support.